DATE: 2026/05/12

Hot Topic | World Models vs. VLA Models: Where Is Embodied Intelligence Ultimately Headed?

In 2026, the embodied intelligence industry has once again become one of the hottest topics in AI and robotics.

On one side, world models are rapidly gaining momentum. From industry discussions to announcements by leading technology companies, world models are increasingly becoming central to narratives around Physical AI, robotics foundation models, synthetic data, and general-purpose robot intelligence.

At GTC, NVIDIA continued advancing technology stacks such as Cosmos, GR00T, and the Physical AI Data Factory, further establishing “world models” as an unavoidable keyword in embodied intelligence.

On the other side, VLA models are increasingly being questioned. Over the past few years, VLA—Vision, Language, Action—was widely regarded as the core paradigm for embodied AI models, connecting the full chain from robot perception and understanding to execution.

But as world models become the new focus, the industry has quickly begun asking difficult questions:

- Has the era of VLA already passed?

- Will world models replace VLA?

- Which path will embodied intelligence ultimately follow?

In the view of SEER Robotics, the most important aspect of this debate is not determining a winner between VLA and world models. Rather, it is a reminder of a deeper truth:

The “brain” of embodied intelligence has never been equivalent to any single large model.

VLA is important. World models are also important. But neither represents the complete answer.

A true robot brain will ultimately be a system-level capability jointly built upon models, control systems, closed-loop data, and real-world scenarios.

Why World Models Suddenly Matter

First, let’s look at world models.

According to the NVIDIA Glossary, a world model is a type of neural network capable of understanding the dynamic rules of the real world, including physical laws and spatial properties.

In the context of robotics, this is easy to understand: for robots to complete tasks in the physical world, they must not only recognize “what is in front of them,” but also predict “what will happen next.”

For example:

- If a cup is pushed to the edge of a table, will it fall?

- After a pallet is lifted, will the center of gravity shift?

- Will a robotic arm collide with surrounding obstacles during operation?

- Will a mobile robot lose path stability when turning in a narrow aisle under changing speed and load conditions?

These are not simply perception problems—they are physical prediction problems.

The core value of world models lies in giving robots the ability to internally simulate the physical world: predicting the consequences of actions before execution, reasoning about different outcomes, and generating synthetic data to supplement long-tail scenarios that are difficult to collect in the real world.

This directly addresses one of the biggest long-standing bottlenecks in embodied intelligence: the extreme scarcity of real-world physical interaction data.

Large language models can learn from massive amounts of internet text data, but robots cannot simply download millions of real-world grasping operations, warehouse obstacle-avoidance scenarios, or stable transport interactions under varying payloads.

Real-world factors such as friction, collision, occlusion, vibration, external disturbances, and execution errors all require repeated learning in either real environments or highly accurate simulations.

This is why the rise of world models is no surprise. They provide robots with imagination and predictive understanding of the physical world.

But World Models Are Not the Complete Robot Brain

At the same time, it is important to remain rational: no matter how powerful world models become, they are still not the complete answer.

✅ They improve a robot’s ability to predict physical outcomes, but they are not motion control systems.

✅ They help generate training data, but they do not automatically establish real-world data closed loops.

✅ They enhance generalization capabilities, but they cannot independently solve industrial challenges such as low-latency control, safety boundaries, hardware errors, and stable cross-scenario deployment.

In short:

World models may help robots “think” better, but they cannot independently guarantee that robots will “act” better.

Real industrial environments are never ideal simulation worlds. Cargo shifts, uneven floors, pallet deformation, human movement, and fluctuating equipment states constantly introduce uncertainty.

Robots must not only predict outcomes, but also execute actions in real time, rapidly correct deviations, and maintain full operational controllability.

This can never be achieved by a standalone world model alone.

Perception, planning, control, execution feedback, and real-world data return must all work together to move from “simulation capability” to “practical deployment capability.”

This is why SEER Robotics has consistently emphasized:

A large model does not equal the complete embodied intelligence brain.

The same remains true today:

A world model is not the full embodied intelligence brain either.

Is VLA Really Obsolete? The Answer Is No

Now let us revisit the VLA paradigm.

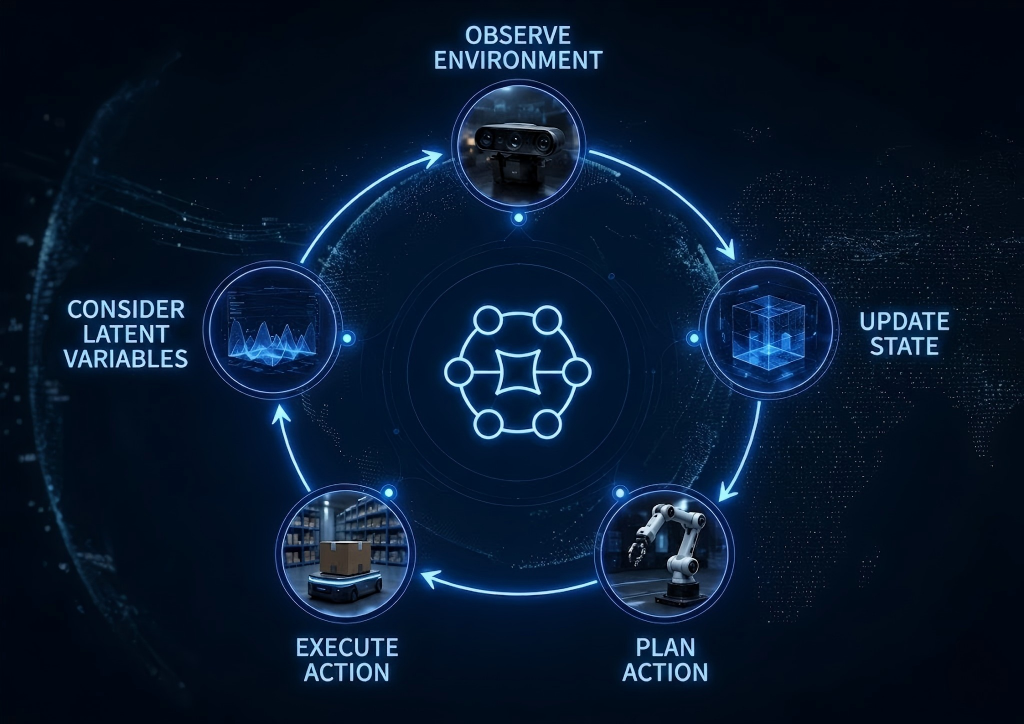

The reason VLA became a core framework for embodied intelligence is simple: it captured the essential closed loop of robotic tasks:

Perceive the environment → understand instructions → execute actions.

As long as robots still need to see the environment, understand tasks, and complete physical operations, the three pillars of Vision, Language, and Action will never disappear.

What will evolve is not VLA itself, but its organizational structure and future form.

Traditional VLA architectures primarily relied on linear mappings from perception to action, enabling robotics systems to move from fragmented modular pipelines toward unified intelligent workflows.

However, once deployed in real-world environments, their limitations became increasingly apparent:

- Highly dynamic environments

- Massive long-tail scenarios

- The inability to fully cover action consequences through labeled data alone

Robots must not only understand “what is happening now,” but also predict “what will happen next.”

And this is precisely where world models can significantly strengthen VLA systems.

Therefore, the emergence of world models does not signal the end of VLA. Instead, it pushes VLA from the stage of “perception-understanding-action” toward a new stage of “prediction-reasoning-execution.”

The relationship between the two is not replacement, but complementary integration and co-evolution.

The Real Key: Stop Obsessing Over Replacement Narratives

“World Models vs. VLA” may be an attractive headline, but reducing the discussion to a binary competition risks misleading the industry.

Embodied intelligence has never been a competition between isolated models.

It is fundamentally a systems engineering challenge:

- 🧠 VLA: Establishes the core pipeline for perception, language understanding, and action execution

- 🌍 World Models: Strengthen physical prediction, simulation, synthetic data generation, and generalization

- 🎮 Control Systems: Ensure real-time execution, low-latency feedback, operational stability, and safety boundaries

- 📊 Real-World Data: Continuously correct models and drive long-term system evolution

None of these components can replace the others.

The future of embodied intelligence will not belong exclusively to VLA, nor solely to world models. It will belong to integrated robotic systems that combine multiple capabilities into one unified architecture.

More important than choosing sides is building a true system-level closed loop.

Whoever can connect models, data, control systems, and real-world scenarios into a self-reinforcing flywheel will ultimately master the core of embodied intelligence.

Industrial Deployment Always Comes Back to Control Systems

This is also the most important perspective from SEER Robotics in this debate.

No matter how exciting the concepts become, robots must ultimately operate in the physical world.

And in industrial environments, there is no room for failure:

- A failed grasp means interrupted operations

- A path deviation may result in equipment collision

- A control delay creates safety risks

- Operational instability turns systems into demos rather than scalable products

This is why the embodied intelligence brain must ultimately be built upon control systems.

Models are responsible for understanding tasks.

World models are responsible for predicting consequences.

Control systems are responsible for converting abstract instructions into stable, real-time, and correctable physical actions.

SEER Robotics has always maintained that building a robot brain is never just about building larger models.

Without control systems, even the strongest models cannot truly enter industrial environments.

Without real-world data, even the most advanced technologies cannot continuously evolve.

Without standardized products and lightweight deployment, even the hottest concepts remain confined to laboratories.

So Where Is Embodied Intelligence Ultimately Headed?

Returning to the central question:

Where will embodied intelligence ultimately go—world models or VLA?

SEER Robotics offers a clear answer:

It is not a choice between the two.

The future of embodied intelligence will move beyond standalone VLA systems and beyond standalone world models. It will evolve toward a system-level robot brain built upon:

Models + Data + Control + Real-World Scenarios

✅ VLA: Enables robots to perceive environments, understand instructions, and execute tasks systematically

✅ World Models: Enable understanding of physical laws, prediction of action outcomes, and supplementation of data limitations

✅ Control Systems: Ensure operational stability, real-time correction, safety, and controllability

✅ Industrial Scenarios: Continuously generate high-value data that drives full-system evolution

This is the true long-term direction of embodied intelligence:

Not pursuing ever-larger models.

Not chasing the latest conceptual trends.

Not competing over technological labels.

But continuously building deep system-level capabilities.

For SEER Robotics, the focus is never on whether world models or VLA are “stronger.”

What matters is:

- Can the technology be deployed in industry?

- Can robots operate reliably?

- Can the entire solution scale efficiently?

World models are an important piece of the puzzle.

VLA is a critical foundation.

But both are only the beginning.

The true future of embodied intelligence will ultimately emerge in factories, logistics centers, warehouses, and countless real-world environments—continuously evolving through real operations and feedback loops.

SEER Robotics,All Robots. One Platform. Fully in Your Control

This means not only building larger models, but enabling truly mature robot brains to take root in industry, enter real-world scenarios, and empower every sector through practical intelligent robotics deployment.

/1.png?x-oss-process=image/format,webp)

/1.png?x-oss-process=image/format,webp)